AI today operates at a scale and intensity that traditional infrastructure was never designed to handle. Training large models, running continuous inference, and processing massive datasets in real time demand a fundamentally different kind of backbone. This is where AI data centers come in – engineered not as passive hosting environments, but as tightly integrated compute ecosystems. These high-performance data centers are built to sustain extreme GPU density, high-throughput data pipelines, and ultra-low latency communication, forming the core engine that powers modern AI systems.

The Shift from Traditional to AI-First Infrastructure

Conventional data centers were designed for predictable enterprise workloads – applications, databases, and storage. AI, however, introduces a fundamentally different paradigm. Training large language models, running real-time inference, and processing vast datasets require far more compute power and data movement than those workloads ever did.

This has led to the rise of specialized data center environments for AI workloads, optimized for parallel processing, ultra-low latency, and high-density compute. These facilities are not just incremental upgrades – they are complete architectural overhauls designed to support AI at scale.

Power Density Transformation: From 10 kW to 100 kW per Rack

One of the most defining shifts in AI data center architecture is the dramatic increase in power density. Traditional data centers typically operated within a range of 5–10 kW per rack. However, the rise of GPU-powered AI workloads has pushed this to nearly 100 kW per rack, fundamentally changing how data centers are designed and operated.

This increase is driven by high-density GPU clusters, where multiple GPUs are packed within a single rack to reduce latency and accelerate processing. Modern GPUs can consume up to 1.2 kW each, meaning that a single GPU can account for roughly 12–24% of the entire power budget of a legacy 5–10 kW rack.

This shift toward high-density data centers is not incremental – it is a roughly tenfold jump, requiring complete redesigns of cooling systems, power infrastructure, and physical layouts to support next-generation AI workloads.
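To make the arithmetic in this section concrete, here is a minimal sketch using only the figures quoted above (up to 1.2 kW per GPU, 5–10 kW legacy racks, roughly 100 kW AI racks). The function names are illustrative, and the GPU count assumes that all rack power goes to GPUs, which overstates what a real rack can hold.

```python
GPU_POWER_KW = 1.2  # upper-bound per-GPU draw quoted in this section


def gpu_share_of_rack(rack_kw: float, gpu_kw: float = GPU_POWER_KW) -> float:
    """Return the fraction of a rack's power budget that one GPU consumes."""
    return gpu_kw / rack_kw


def max_gpus_per_rack(rack_kw: float, gpu_kw: float = GPU_POWER_KW) -> int:
    """Upper bound on GPUs per rack, assuming ALL rack power goes to GPUs."""
    return int(rack_kw // gpu_kw)


if __name__ == "__main__":
    for rack_kw in (5, 10, 100):
        share = gpu_share_of_rack(rack_kw)
        count = max_gpus_per_rack(rack_kw)
        print(f"{rack_kw:>3} kW rack: one GPU = {share:.1%} of budget, "
              f"at most {count} GPUs")
```

Under these assumptions a legacy 10 kW rack tops out at 8 such GPUs, while a 100 kW rack could in principle power about 83. Real racks hold fewer, because CPUs, memory, networking, and power conversion share the same budget.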

GPU Data Center Architecture: The Core of AI Compute

At the center of AI infrastructure lies the GPU. Unlike CPUs, which are optimized for sequential processing, GPUs excel at parallel computation – making them ideal for AI model training and inference.

Modern GPU data center architecture integrates thousands of high-performance GPUs into interconnected clusters, often referred to as superclusters. These clusters are capable of handling massive AI workloads, enabling enterprises to train complex models faster and deploy them at scale. The ability to scale GPU clusters seamlessly, from a few nodes to thousands, has become a defining feature of high-performance data centers.

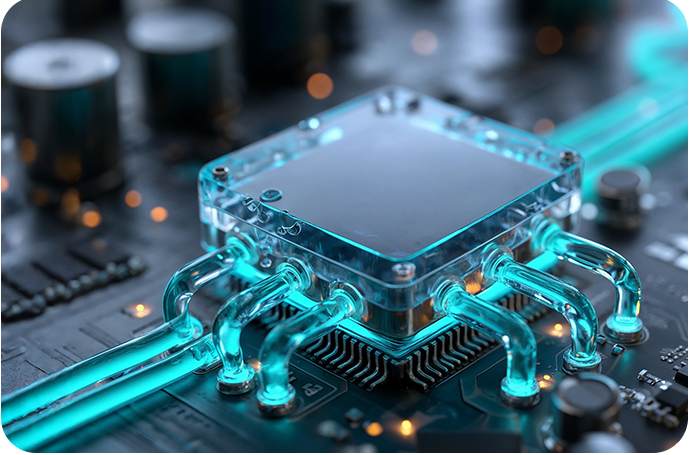

Liquid Cooling in AI Data Centers: Managing Extreme Heat

The rise in power density has made liquid cooling in data centers a necessity rather than an option. Traditional air cooling systems are no longer sufficient to manage the heat generated by high-density GPU clusters.

Liquid cooling technologies use water-based or refrigerant-based systems to efficiently dissipate heat, enabling AI data centers to sustain workloads operating at 100 kW per rack. Due to its superior thermal conductivity, liquid cooling allows data centers to operate at higher efficiency levels while maintaining optimal hardware performance.

However, modern AI data center cooling systems are hybrid in nature. Even the most advanced deployments maintain a combination of liquid and air cooling, often in an 80/20 ratio. While GPUs are primarily liquid-cooled, supporting infrastructure such as storage and networking components may still rely on air cooling. This hybrid approach ensures flexibility while supporting diverse AI workloads within the same environment.
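As a rough illustration of what the 80/20 split implies, the sketch below divides a rack's heat load between liquid and air and estimates the water flow needed to carry the liquid-cooled share. It assumes that the ratio applies to a single rack, that essentially all electrical power ends up as heat, that the coolant is plain water, and that the coolant warms by a hypothetical 10 K across the rack. None of these values come from a specific deployment.

```python
# Approximate specific heat of liquid water, in kJ per kg per kelvin.
WATER_SPECIFIC_HEAT_KJ_PER_KG_K = 4.186


def split_heat_load(rack_kw: float, liquid_fraction: float = 0.8) -> tuple[float, float]:
    """Split a rack's heat load (kW) into (liquid-cooled, air-cooled) parts."""
    liquid_kw = rack_kw * liquid_fraction
    return liquid_kw, rack_kw - liquid_kw


def water_flow_kg_per_s(heat_kw: float, delta_t_k: float) -> float:
    """Mass flow of water needed to absorb heat_kw with a delta_t_k rise.

    Uses the steady-state heat balance Q = m_dot * c_p * delta_T.
    """
    return heat_kw / (WATER_SPECIFIC_HEAT_KJ_PER_KG_K * delta_t_k)


if __name__ == "__main__":
    liquid_kw, air_kw = split_heat_load(100.0)
    flow = water_flow_kg_per_s(liquid_kw, delta_t_k=10.0)  # 10 K is an assumption
    print(f"liquid: {liquid_kw:.0f} kW, air: {air_kw:.0f} kW, "
          f"water flow: {flow:.2f} kg/s")
```

With these inputs the liquid loop must move a little under 2 kg of water per second for a 100 kW rack, while the remaining 20 kW is still twice the total load of a legacy 10 kW rack.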

High-Speed Interconnects: Eliminating Bottlenecks

In AI environments, raw compute capacity is only as effective as the fabric that binds it. Large-scale model training is inherently distributed – spanning hundreds or thousands of GPUs that must operate as a single logical system, continuously exchanging gradients, parameters, and intermediate states. This makes the interconnect layer a critical determinant of performance. Ultra-low latency, high-bandwidth networking fabrics – built on technologies like advanced InfiniBand and GPU-to-GPU interconnects – enable tightly synchronized compute, minimizing communication overhead and idle cycles. The result is near-linear scaling of performance: as long as communication overhead stays small, adding more GPUs translates almost directly into faster training cycles.
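To show why interconnect bandwidth governs scaling, here is a simplified, bandwidth-only sketch of a ring all-reduce, one common way to synchronize gradients across GPUs. It is not necessarily the algorithm any given cluster uses. The model ignores latency, topology, and the overlap of communication with compute that real training frameworks rely on, and every number in the usage example is hypothetical.

```python
def ring_allreduce_seconds(num_gpus: int, payload_gb: float,
                           link_gb_per_s: float) -> float:
    """Bandwidth-only time for a ring all-reduce of payload_gb per GPU.

    Each GPU sends and receives 2 * (N - 1) / N times the payload.
    """
    if num_gpus < 2:
        return 0.0  # a single GPU has nothing to synchronize
    return 2 * (num_gpus - 1) / num_gpus * payload_gb / link_gb_per_s


def scaling_efficiency(num_gpus: int, compute_s: float, payload_gb: float,
                       link_gb_per_s: float) -> float:
    """Fraction of each step spent computing, assuming NO compute/comm overlap."""
    comm_s = ring_allreduce_seconds(num_gpus, payload_gb, link_gb_per_s)
    return compute_s / (compute_s + comm_s)


if __name__ == "__main__":
    # Hypothetical step: 1 s of compute, 10 GB of gradients per GPU.
    for link in (50.0, 400.0):
        eff = scaling_efficiency(8, compute_s=1.0, payload_gb=10.0,
                                 link_gb_per_s=link)
        print(f"{link:>5.0f} GB/s link: efficiency {eff:.0%}")
```

Even in this crude model, the faster link recovers most of the time lost to synchronization, which is why the fabric matters as much as the GPUs themselves.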

Storage Built for AI Throughput

AI workloads are driven as much by data movement as by compute, requiring storage systems that can match GPU speed and scale. From ingesting massive datasets to sustaining continuous training pipelines, performance must remain consistent under load. NVMe-based architectures deliver ultra-low latency and high IOPS, ensuring data is always available without bottlenecks. In AI data centers, storage is tightly integrated with compute, keeping pipelines saturated and GPUs fully utilized, ultimately accelerating training cycles and overall system efficiency.
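A back-of-the-envelope sketch of what keeping pipelines saturated means in practice: the aggregate read rate that storage must sustain is the number of GPUs times the samples each one consumes per second times the size of a sample. The function names and all figures in the usage example, including the per-drive throughput, are hypothetical and chosen only for illustration.

```python
import math


def required_read_gb_per_s(num_gpus: int, samples_per_s_per_gpu: float,
                           bytes_per_sample: float) -> float:
    """Aggregate read throughput (GB/s) needed to keep every GPU fed."""
    return num_gpus * samples_per_s_per_gpu * bytes_per_sample / 1e9


def min_drives(required_gb_per_s: float, drive_gb_per_s: float) -> int:
    """Minimum drive count, assuming reads spread perfectly across drives."""
    return math.ceil(required_gb_per_s / drive_gb_per_s)


if __name__ == "__main__":
    # Hypothetical cluster: 1,000 GPUs, 2,000 samples/s each, 150 KB per sample.
    need = required_read_gb_per_s(1000, 2000, 150_000)
    # Hypothetical NVMe drive sustaining 7 GB/s of reads.
    print(f"need {need:.0f} GB/s, at least {min_drives(need, 7.0)} drives")
```

The drive count is a lower bound: caching, replication, network limits, and uneven access patterns all change the real requirement.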

Water Usage and Cooling Trade-Offs in AI Infrastructure

The impact of AI data centers on water consumption is an important aspect of sustainability. While liquid cooling is widely used, it typically operates within closed-loop systems that do not significantly increase water usage.

At the facility level, cooling methods such as evaporative cooling and dry cooling present trade-offs between water and energy consumption. Operators must carefully balance these factors based on regional conditions, particularly in water-sensitive areas.

As AI infrastructure evolves, improvements in cooling efficiency may reduce reliance on water-intensive systems, further enhancing the sustainability of AI-ready data centers.

Powering AI at Hyperscale

AI training workloads run as long-duration, compute-intensive cycles where even brief interruptions can reset progress and incur significant cost. Power infrastructure, therefore, becomes a critical layer of resilience, not just support. AI data centers are engineered with hyperscale power architectures, combining redundant utility feeds, advanced UPS systems, and distributed backup to ensure uninterrupted execution. This layered design is what allows high-performance data centers to sustain continuous AI workloads without disruption.
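The value of redundancy can be sketched with a standard reliability formula: if any one of several power sources is enough to carry the load, the combined availability is one minus the probability that all of them fail together. The sketch assumes independent failures, which real feeds sharing a grid or a building rarely satisfy exactly, and the 99% availability figure is hypothetical.

```python
import math

HOURS_PER_YEAR = 8760


def parallel_availability(availabilities: list[float]) -> float:
    """Availability of redundant sources where any single one suffices.

    Assumes failures are statistically independent (an idealization).
    """
    return 1.0 - math.prod(1.0 - a for a in availabilities)


def expected_downtime_hours(availability: float) -> float:
    """Expected hours per year without power at the given availability."""
    return (1.0 - availability) * HOURS_PER_YEAR


if __name__ == "__main__":
    single = parallel_availability([0.99])        # one hypothetical feed
    dual = parallel_availability([0.99, 0.99])    # two redundant feeds
    print(f"single feed: {expected_downtime_hours(single):.1f} h/year down")
    print(f"dual feeds:  {expected_downtime_hours(dual):.2f} h/year down")
```

In this idealized model, adding a second feed cuts expected downtime a hundredfold, which is the reasoning behind stacking redundant feeds, UPS systems, and backup.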

Security and Sovereignty in AI Infrastructure

As AI becomes integral to national and enterprise operations, data security and sovereignty have taken center stage. Sensitive datasets, proprietary models, and critical applications must be protected at all levels.

A data center in India, for instance, must address regulatory requirements around data residency and governance. Sovereign AI infrastructure ensures that data remains within national borders while complying with local regulations. Combined with multi-layer security – from biometric access controls to 24×7 monitoring – these facilities provide a secure foundation for AI innovation.

The Indian Context: Building for Scale and Sovereignty

India is witnessing a surge in AI adoption across sectors, driving demand for advanced infrastructure. The emergence of AI data centers in the country reflects a broader shift toward self-reliance in digital capabilities.

A modern data center in India is no longer just a hosting facility; it is a strategic asset that enables enterprises, startups, and government bodies to build, train, and deploy AI solutions at scale. With increasing focus on sovereign AI, the role of localized, high-performance infrastructure is becoming even more critical.

Yotta Data Center: Powering India’s AI Ambitions

At the forefront of this transformation is Yotta, which operates one of the most advanced AI-ready infrastructures in the country. Yotta’s AI ecosystem is powered by GPU-driven superclusters, with thousands of NVIDIA H100 GPUs already operational, complemented by L40S GPUs for graphics-intensive workloads and upcoming next-gen Blackwell GPUs to support future AI models. This robust GPU data center architecture is enhanced by ultra-low latency networking, enabling seamless distributed training at scale. High-speed NVMe-based storage ensures that data-intensive AI applications – from large language models to real-time analytics – run without bottlenecks.

Through Shakti Cloud, Yotta’s AI cloud, businesses can tap into AI workload capabilities on demand, without needing to build or manage their own infrastructure. It combines high-density GPU clusters, ultra-fast networking, and high-performance storage into a unified AI platform, purpose-built for training, fine-tuning, and deploying AI models at scale.

Shakti Cloud operates within India’s borders, aligning with data sovereignty and compliance requirements while delivering global-scale AI performance. Together, Yotta’s underlying infrastructure and its Shakti Cloud offering create a tightly integrated ecosystem – positioning Yotta as a key enabler of India’s rapidly evolving AI landscape.